Your AI Has Instructions. Who’s Governing Them? (Part 2)

This article concludes the one published last week, which introduced the need to govern the instructions given to AI.

See Your AI Has Instructions. Who’s Governing Them?

For a long time, AI governance has focused on tools and organizational roles. These are important, but the “micro” level of what actually drives AI solutions to behave the way they do has received relatively little attention. That has to change: system prompts that control AI behavior must be fully governed, not treated as technical artifacts left to technical staff to sort out.

Why This Matters Now

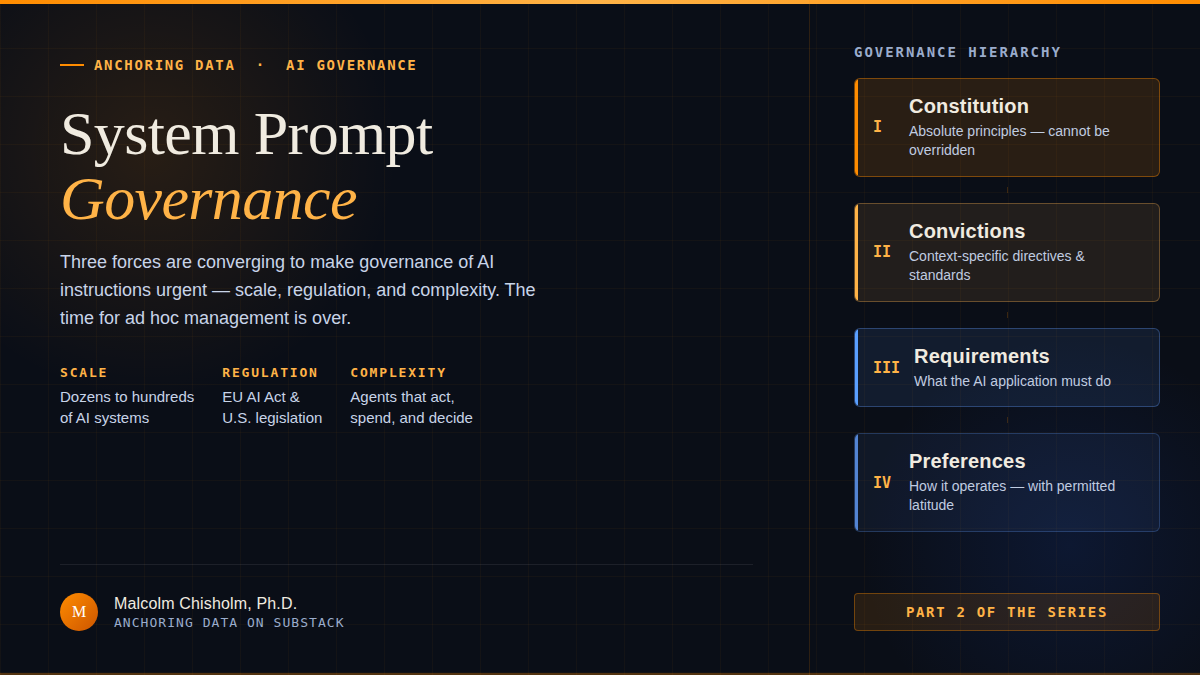

Three forces are converging to make system prompt governance urgent:

Scale: Organizations are moving from a handful of AI experiments to dozens or hundreds of AI-powered systems. At that scale, ad hoc management breaks down completely, and small risks become significant.

Regulation: The EU AI Act, emerging U.S. state legislation, and industry-specific regulations are increasingly requiring organizations to demonstrate oversight and accountability for AI system behavior. “We didn’t know what was in the prompt” will not be an acceptable defense.

Complexity: Modern AI systems don’t just respond to users; they use tools, access databases, trigger workflows, pay for goods and services, and make decisions. The system prompt governing such an agent is not a paragraph of text; it’s an operational control document. Treating it casually is like treating the operating manual for a nuclear reactor casually.

What You Can Do This Week

If you’re a data governance professional, an AI leader, or anyone responsible for AI systems in your organization, here are four concrete steps:

Understand. Learn what system prompts are and why they are so important. Provide awareness and education on them. Develop principles for their governance.

Take inventory. How many system prompts does your organization have in production? Who wrote them? When were they last reviewed? Most organizations cannot answer these questions, and that alone will be alarming when exposed. This will provide more than enough justification for investment in governance.

Pick your highest-risk prompt. Choose the one governing the AI system that touches the most customers, handles the most sensitive data, or operates in the most regulated context. Assess it against the seven pillars above. The gaps you find will be instructive (at a minimum).

Start the conversation. Share this article with your data governance council, your AI/ML team, or your risk management colleagues. The most important step is getting the right people in the room and recognizing that system prompts are enterprise assets that deserve even more governance rigor than we apply to data.
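
For readers who want to see what the inventory step might look like in practice, here is a minimal Python sketch. It records the three questions asked above (which prompts are in production, who wrote them, and when they were last reviewed) and flags the gaps. The `PromptRecord` fields, the `inventory_gaps` function, and the 180-day review window are all illustrative assumptions, not an established standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class PromptRecord:
    """One inventory row per system prompt (hypothetical fields)."""
    system_name: str
    author: Optional[str]          # None when nobody knows who wrote it
    last_reviewed: Optional[date]  # None when it has never been reviewed
    in_production: bool = True


def inventory_gaps(records: List[PromptRecord], today: date,
                   max_age_days: int = 180) -> Dict[str, List[str]]:
    """Return the governance gaps found for each production prompt.

    max_age_days is an assumed review window; choose your own.
    """
    gaps: Dict[str, List[str]] = {}
    for record in records:
        if not record.in_production:
            continue  # only production prompts are in scope here
        issues: List[str] = []
        if record.author is None:
            issues.append("no known author")
        if record.last_reviewed is None:
            issues.append("never reviewed")
        elif (today - record.last_reviewed).days > max_age_days:
            issues.append("review overdue")
        if issues:
            gaps[record.system_name] = issues
    return gaps
```

Even a spreadsheet would do the same job; the point is that every production prompt gets a row, and an empty cell is a finding in its own right.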

Where We Go from Here

A lot more thought needs to go into system prompt governance. There seems to be a spectrum of different contexts that need to be woven into system prompts. At the highest level, every organization needs a Constitution that outlines its core principles for AI, which cannot be sidestepped. These are absolutes. Then there are convictions that operate in particular contexts, then requirements for what an AI application is to do, and finally preferences about how it might operate, which allow some leeway. The reality is more complex than this, but such a schema is a useful way of thinking about how to govern system prompts.
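
To make the four-level schema a little more tangible, here is a rough Python sketch of how it could be modeled. The names `Tier`, `Instruction`, `assemble_prompt`, and `may_relax`, the `context` field, and the bracketed labels in the output are all hypothetical choices made for illustration. This is one possible reading of the schema, not a finished design.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Tier(IntEnum):
    """The four levels, ordered from absolute to flexible."""
    CONSTITUTION = 0  # core principles that cannot be sidestepped
    CONVICTION = 1    # operates in particular contexts
    REQUIREMENT = 2   # what the AI application is to do
    PREFERENCE = 3    # how it might operate, with some leeway


@dataclass(frozen=True)
class Instruction:
    tier: Tier
    text: str
    context: str = "all"  # hypothetical tag; "all" applies everywhere


def assemble_prompt(instructions: List[Instruction], context: str) -> str:
    """Build a system prompt for one context, highest tier first."""
    applicable = [
        i for i in instructions
        # Constitution entries always apply, whatever their context tag.
        if i.tier is Tier.CONSTITUTION or i.context in ("all", context)
    ]
    ordered = sorted(applicable, key=lambda i: i.tier)
    return "\n".join(f"[{i.tier.name}] {i.text}" for i in ordered)


def may_relax(instruction: Instruction) -> bool:
    """In this sketch, only preferences allow leeway."""
    return instruction.tier is Tier.PREFERENCE
```

Keeping the tier on every instruction means a reviewer can see at a glance which lines in a prompt are absolutes and which are open to adjustment.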

I’ll be writing more about System Prompt Governance in upcoming editions of Anchoring Data, including detailed breakdowns of each pillar, real-world assessment templates, and practical implementation guides. If this resonates with you, I’d love to hear your perspective — where does your organization stand on prompt governance?
