Your AI Has Instructions. Who’s Governing Them? (Part 1)

Why System Prompt Governance Is the Next Frontier — and Why Data Governance Professionals Should Lead It

Every AI system you interact with — every chatbot, every copilot, every autonomous agent — operates under a set of instructions called a system prompt. This prompt tells the AI what it is, what it should do, how it should behave, what it must avoid, and how it should handle situations it wasn’t explicitly designed for.

System prompts are, in effect, executable policy documents for an AI system. They constrain the behavior of the AI system, just as real policies constrain business behavior. They are also the bridge between what an organization wants its AI to do and what the AI actually does.

And right now, almost nobody is governing them.

More Than “Yet Another Ungoverned Asset”

If you’ve spent time in data governance, this situation will feel eerily familiar.

Twenty years ago, most organizations had data everywhere (in databases, spreadsheets, reports, and applications), and almost no one was asking fundamental questions: Who owns this data? What does this data element actually mean? How do we know it is accurate? What happens when it changes? Who approved this definition?

Back then, everyone knew data was important. But it was treated more as a byproduct of automation than as an asset requiring proactive management.

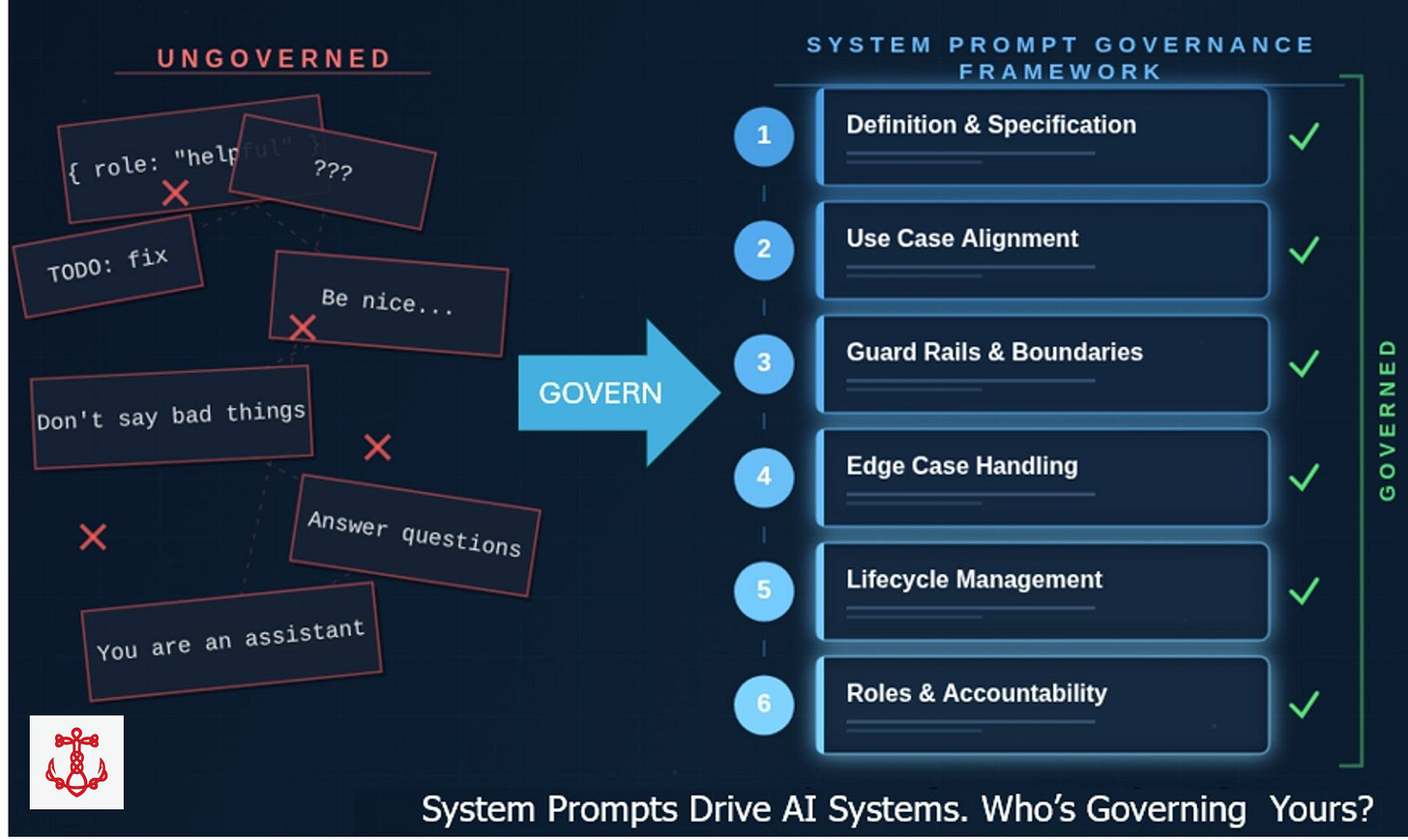

That is where we are with system prompts today. Overwhelmingly, they are written by developers or other technical staff, deployed into production, and left to run, with no formal ownership, no lifecycle management, no quality standards, no version control discipline, and no systematic handling of edge cases. Most organizations probably do not even know how many system prompts they have in production, let alone whether those prompts are consistent with each other or aligned with business objectives, use cases, or enterprise AI policies.

This is system prompt sprawl, and it carries real consequences.

What Can Go Wrong (and Already Has)

The risks of ungoverned system prompts are not theoretical. Some examples:

• AI systems making unauthorized promises to customers because the prompt did not explicitly prohibit commitments.

• Chatbots providing regulated advice (financial, medical, legal) without appropriate disclaimers, because nobody with compliance expertise reviewed the prompt.

• Prompt injection attacks that manipulated AI systems into revealing internal instructions or bypassing safety controls, because the prompt defined no security boundaries.

• Inconsistent customer experiences across channels because different engineering teams wrote different prompts for similar use cases with no coordination.

• Users being insulted by AI systems because system prompts lacked proper conversational guardrails.

• AI systems that “hallucinated” confidently about topics they should have declined to address, because the prompt never defined what was out of scope.

Each of these failures traces back to the same root cause: the system prompt was not governed.

What Does “Governing” a System Prompt Actually Mean?

This is the critical question, and it’s where I think many people’s thinking stops too early.

When people hear “prompt governance,” they tend to think of security: preventing jailbreaks and injection attacks. Security is important but, as in data governance, it is only one dimension. Genuine prompt governance is much broader. I’ve developed what I’m calling the System Prompt Governance Framework (SPGF), organized around seven pillars:

1. Definition and Specification: Before writing a single word of prompt text, the author needs a clear articulation of what the prompt must accomplish, for whom, and why. This means a formal specification document, much like a business requirements document, that captures purpose, target users, functional requirements, success criteria, and, critically, precise definitions of key terms. When your prompt says the AI should be “helpful” or “professional” or “brief,” what do those words actually mean in operational terms? Data governance professionals have been fighting this battle around business glossaries for years. The same discipline applies here.

2. Use Case Alignment: Every prompt exists to serve a specific use case. The prompt’s instructions, tone, capabilities, and constraints must be precisely calibrated to the context — not generically written and deployed across different situations. A customer service chatbot for a bank has fundamentally different requirements than an internal knowledge assistant for an engineering team.

3. Guardrails and Boundaries: This encompasses content boundaries (what the AI must not discuss), behavioral boundaries (actions it must not take), regulatory compliance, ethical constraints, and security defenses. Guardrails are not about making the AI less useful – they are about defining the operating envelope within which the AI can be maximally useful without creating risk.

4. Edge Case and Exception Handling: Most prompts are written for the happy path. What happens when a user asks about something outside the AI’s scope? When input is ambiguous? When someone deliberately tries to manipulate the system? When an external tool the AI relies on fails? When a user is in emotional distress? These situations require explicit instructions, not improvisation by the AI.

5. Lifecycle Management: System prompts are living documents. They need version control, change management, testing protocols, deployment processes, monitoring for behavioral drift, and eventually, retirement. A prompt that was appropriate six months ago may no longer be aligned with current business requirements, regulatory changes, or the capabilities of the underlying model.

6. Roles and Accountability: Who owns each prompt? Who is responsible for its quality? Who performs the lifecycle management? Who reviews changes? Who audits the portfolio? Without named accountability, governance is just documentation that nobody reads.

7. Enterprise Level: Plenty of governance is needed at the individual prompt level, but an enterprise level must also be put in place. An overall policy for system prompt governance is needed. Education is required, so that staff do not confuse system prompt governance with prompt engineering. And inconsistencies between different system prompts cannot be allowed; remember, they are like mini-policies. This requires centralized management of a portfolio or catalog of system prompts.
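To make the pillars a little more concrete, here is a minimal Python sketch of what one entry in a system prompt catalog might record, with a simple check for governance gaps. This is an illustration under stated assumptions, not part of the SPGF itself: the `PromptRecord` fields, the status values, and the `governance_gaps` and `use_case_overlaps` helpers are all hypothetical names chosen for this example (Python 3.9 or later).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRecord:
    """One entry in a hypothetical system prompt catalog (all fields are assumptions)."""
    prompt_id: str
    version: str                                               # pillar 5: version control
    use_case: str = ""                                         # pillar 2: use case alignment
    spec_doc: Optional[str] = None                             # pillar 1: reference to the specification
    guardrails: list[str] = field(default_factory=list)        # pillar 3: guardrails and boundaries
    edge_case_rules: list[str] = field(default_factory=list)   # pillar 4: edge case handling
    owner: Optional[str] = None                                # pillar 6: named accountability
    reviewer: Optional[str] = None                             # pillar 6: who reviews changes
    status: str = "draft"                                      # pillar 5: e.g. draft, deployed, retired


def governance_gaps(record: PromptRecord) -> list[str]:
    """Return a plain-language list of governance items missing from one record."""
    gaps = []
    if not record.spec_doc:
        gaps.append("no specification document")
    if not record.use_case:
        gaps.append("no documented use case")
    if not record.guardrails:
        gaps.append("no guardrails recorded")
    if not record.edge_case_rules:
        gaps.append("no edge case handling recorded")
    if not record.owner:
        gaps.append("no named owner")
    if record.status == "deployed" and not record.reviewer:
        gaps.append("deployed without a named reviewer")
    return gaps


def use_case_overlaps(catalog: list[PromptRecord]) -> dict[str, list[str]]:
    """Pillar 7: list use cases served by more than one deployed prompt,
    which are candidates for a cross-prompt consistency review."""
    by_use_case: dict[str, list[str]] = {}
    for record in catalog:
        if record.status == "deployed" and record.use_case:
            by_use_case.setdefault(record.use_case, []).append(record.prompt_id)
    return {uc: ids for uc, ids in by_use_case.items() if len(ids) > 1}


# Usage: a deployed prompt with almost no governance metadata.
bank_bot = PromptRecord(
    prompt_id="bank-support",
    version="1.0",
    use_case="retail banking support",
    status="deployed",
)
for gap in governance_gaps(bank_bot):
    print(f"{bank_bot.prompt_id}: {gap}")
```

The point of the sketch is the shape of the record rather than the specific checks: once each prompt has an owner, a version, a documented use case, and recorded guardrails, questions such as “how many prompts do we have in production?” and “which ones overlap?” become simple queries instead of investigations.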

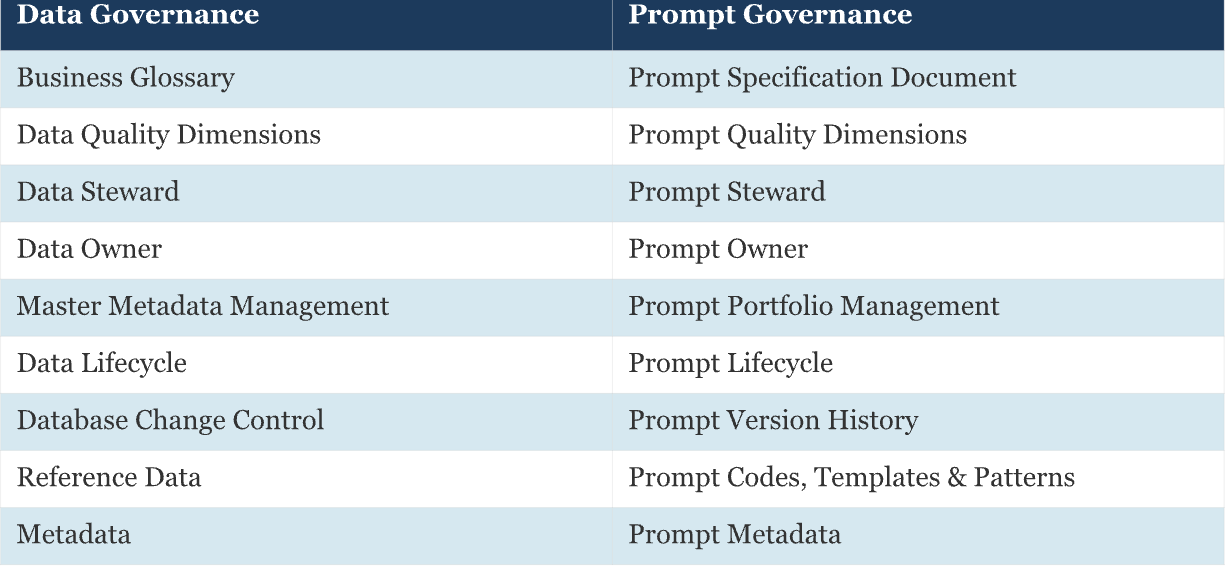

Data Governance Principles Apply Directly

Here’s what excites me most about this space: we don’t need to invent governance from scratch. The principles that data governance professionals have refined over decades map almost perfectly to system prompt governance. Let’s compare them:

Although the domain of system prompts is new, much of what is required at least rhymes with what data governance has done in the past. Some aspects are genuinely new, but we at least have a start.

A Maturity Model for Where You Stand

I’ve structured a five-level maturity model for prompt governance:

Level 1 — Ad Hoc: Prompts are written by individual developers with no standards or documentation. This is where most organizations are today.

Level 2 — Aware: Basic documentation exists for some prompts. People recognize that prompt quality matters, but processes are informal. Some prompts are shared for best practices.

Level 3 — Defined: Formal governance processes are established. All seven pillars are addressed. Roles are assigned. Lifecycle management is operational.

Level 4 — Managed: Prompt quality is measured quantitatively. Testing is systematic. The prompt portfolio is managed for cross-prompt consistency.

Level 5 — Optimizing: Continuous improvement driven by data. Prompt governance is embedded in organizational culture and contributes measurably to AI performance.

At this level, the enterprise level of prompt governance is fully established.

Most organizations would honestly assess themselves at Level 1, with some pockets of Level 2. The gap between where they are and where they need to be represents both a risk and an opportunity.
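For readers who want to try a quick self-assessment, the levels above can be sketched as a cumulative checklist in Python. This is a hedged illustration only: the `Assessment` flags are my own shorthand for the level descriptions, and the rule that a level counts only when every lower level is also satisfied is an assumption of this sketch, not a formal scoring method.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    """Hypothetical yes/no answers, loosely paraphrasing the level descriptions."""
    some_prompts_documented: bool = False   # Level 2
    all_pillars_addressed: bool = False     # Level 3
    roles_assigned: bool = False            # Level 3
    lifecycle_operational: bool = False     # Level 3
    quality_measured: bool = False          # Level 4
    testing_systematic: bool = False        # Level 4
    portfolio_managed: bool = False         # Level 4
    continuous_improvement: bool = False    # Level 5
    embedded_in_culture: bool = False       # Level 5


LEVEL_NAMES = {1: "Ad Hoc", 2: "Aware", 3: "Defined", 4: "Managed", 5: "Optimizing"}


def maturity_level(a: Assessment) -> int:
    """Return the highest level whose criteria, and all lower levels' criteria, are met."""
    requirements = [
        (2, [a.some_prompts_documented]),
        (3, [a.all_pillars_addressed, a.roles_assigned, a.lifecycle_operational]),
        (4, [a.quality_measured, a.testing_systematic, a.portfolio_managed]),
        (5, [a.continuous_improvement, a.embedded_in_culture]),
    ]
    level = 1  # Level 1 (Ad Hoc) is the starting point for everyone
    for candidate, checks in requirements:
        if not all(checks):
            break  # stop at the first level that is not fully satisfied
        level = candidate
    return level


# Usage: systematic testing exists, but roles and lifecycle are not yet in place,
# so the Level 4 practice does not lift the overall assessment past Level 2.
answers = Assessment(some_prompts_documented=True, testing_systematic=True)
level = maturity_level(answers)
print(f"Level {level}: {LEVEL_NAMES[level]}")
```

The cumulative rule is deliberate: an isolated advanced practice does not make up for missing foundations such as named roles and an operational lifecycle.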
